… is the question researchers asked in a recent paper (submitted, but apparently not yet peer-reviewed or published).

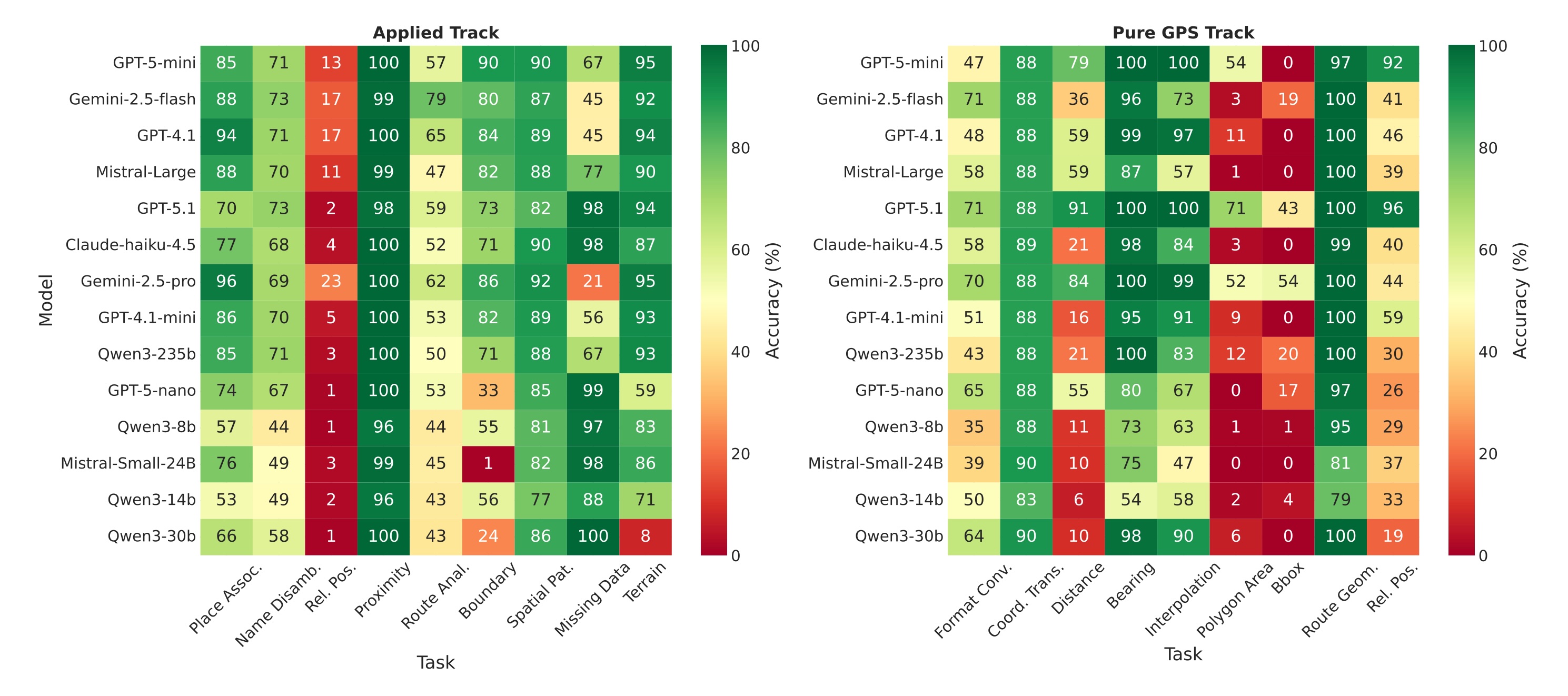

The team around Thinh Hung Truong created GPSBench, a benchmark to evaluate the ability of LLMs¹ to understand and work with coordinates. The benchmark contains 57,800 samples across 17 tasks. The researchers divide the tasks into the following two tracks:

- “Pure GPS track”²:
  - Format conversion, e.g.: “Convert 32°3'9.0"S, 115°53'16.2"E to decimal”
  - Coordinate transformation, e.g.: “Transform (19.37, 95.22) to Universal Transverse Mercator (UTM)”
  - Distance calculation, e.g.: “Distance from (-2.13, -47.56) to (-22.63, -47.05)?”
  - Bearing computation, e.g.: “Bearing from (49.28, -123.13) to (5.89, 5.68)?”
  - Coordinate interpolation, e.g.: “50% along (29.93, 117.95) to (-6.99, 106.55)?”
  - Area and perimeter, e.g.: “Area of polygon [(-2.85, 33.08), (21.15, 72.96), (29.35, 105.89)]?”
  - Bounding box, e.g.: “Center of [(-2.85, 33.08), (21.15, 72.96), (53.60, 24.75), …]?”
  - Route geometry, e.g.: “Simplify 12-point path using the Ramer–Douglas–Peucker algorithm”
  - Relative position (coordinate-based), e.g.: “Direction from (40.25, -8.39) to (0.06, 34.29)?”
- “Applied track”:
  - Place association, e.g.: “City at (41.12, -8.65)?”
  - Name disambiguation, e.g.: “4 cities named ‘Nawābganj’. Which at (26.93, 81.20)?”
  - Relative position, e.g.: “Mikkeli to Sosnovka?”
  - Proximity, e.g.: “Closest to Titirangi: Elsdorf, Itatim, Murray, Cianjur?”
  - Route analysis, e.g.: “Canberra on Gröbenzell→Dois Irmãos?”
  - Spatial patterns, e.g.: “Outlier (by distance): Mornington, Ringwood, Caroline Springs, Ballajura, Sonneberg?”
  - Boundary analysis, e.g.: “Group by continent: Corroios, Wimbledon, Toms River, Santa Ana”
  - Terrain classification, e.g.: “Terrain at (-27.47, 153.03)?”
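To get a feel for what the Pure GPS tasks ask of a model, here is a minimal Python sketch of three of them: format conversion, distance calculation and bearing computation. It is an illustration only and not taken from GPSBench. The function names are made up for this post, and the spherical Earth model (haversine formula, mean radius of about 6371 km) is my assumption. The benchmark's reference answers may be computed differently, for example on the WGS84 ellipsoid.

```python
import math
import re

# Assumption: spherical Earth with the mean radius; not necessarily what GPSBench uses.
EARTH_RADIUS_KM = 6371.0088


def dms_to_decimal(dms: str) -> float:
    """Convert a string such as 32°3'9.0"S to signed decimal degrees."""
    match = re.fullmatch(r'''\s*(\d+)°(\d+)'(\d+(?:\.\d+)?)"([NSEW])\s*''', dms)
    if match is None:
        raise ValueError(f"unrecognised DMS string: {dms!r}")
    degrees, minutes, seconds, hemisphere = match.groups()
    value = int(degrees) + int(minutes) / 60 + float(seconds) / 3600
    # South and west are negative by convention.
    return -value if hemisphere in "SW" else value


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres between two points (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def initial_bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Initial great-circle bearing from point 1 to point 2, in degrees clockwise from north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlam = math.radians(lon2 - lon1)
    y = math.sin(dlam) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlam)
    return math.degrees(math.atan2(y, x)) % 360


if __name__ == "__main__":
    # The example prompts from the task list above.
    print(round(dms_to_decimal('32°3\'9.0"S'), 4))   # -32.0525
    print(round(dms_to_decimal('115°53\'16.2"E'), 4))  # 115.8878
    print(haversine_km(-2.13, -47.56, -22.63, -47.05))
    print(initial_bearing_deg(49.28, -123.13, 5.89, 5.68))
```

Each function is only a few lines of trigonometry, which makes these tasks a useful test of whether an LLM can carry out the arithmetic reliably rather than recall facts. Note that a spherical model can deviate from ellipsoidal results by a fraction of a percent, so any comparison against benchmark answers would need a tolerance.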

The GPSBench repo contains the dataset and evaluation code to analyse LLMs’ performance on the benchmark. Supported LLM providers include OpenAI, Anthropic, Google, and providers accessible through OpenRouter.

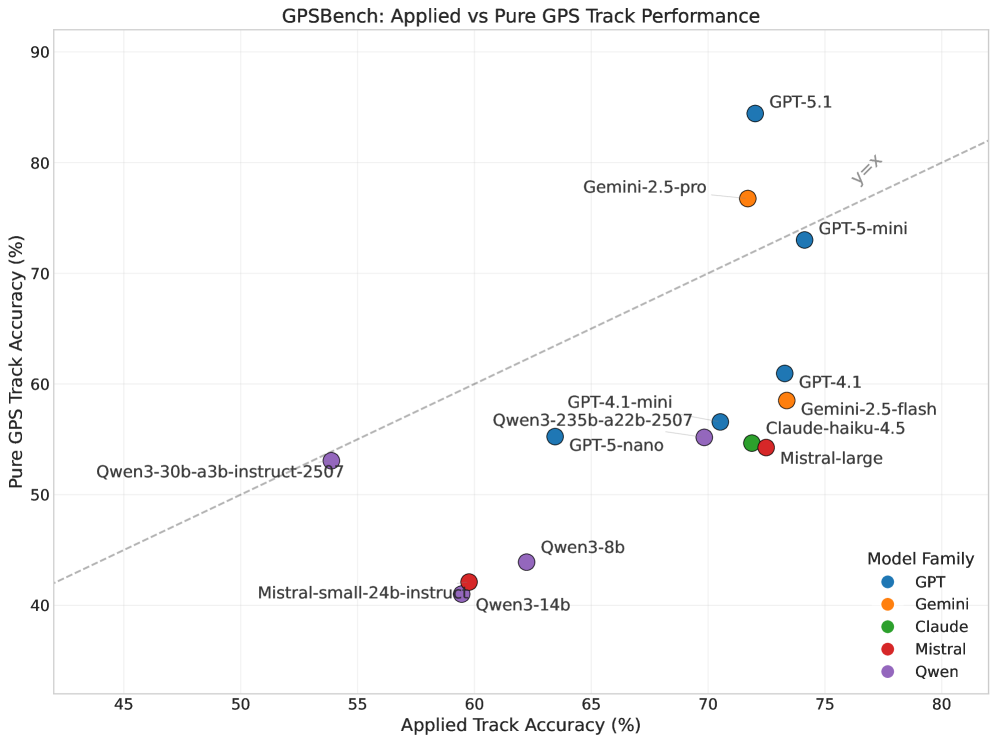

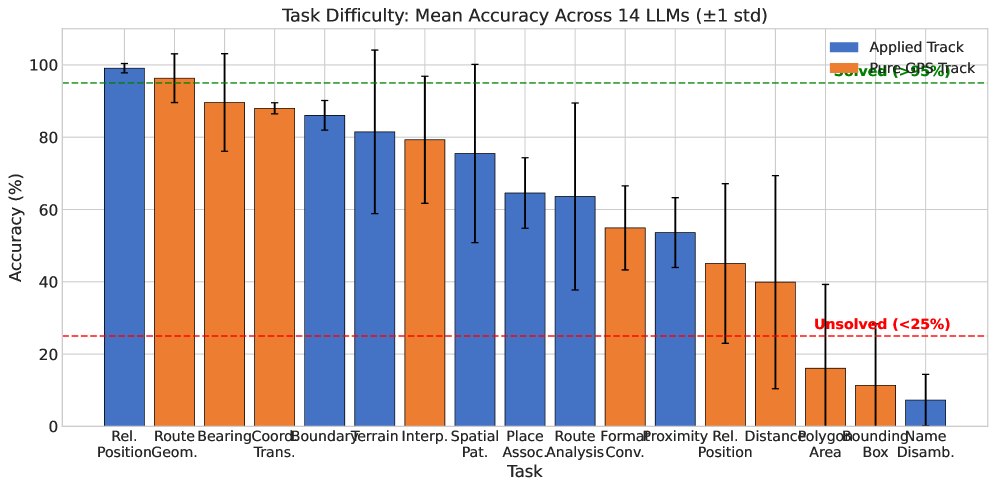

The summary by the research team states the following (note that the paper is not yet peer-reviewed, so take this with a grain of salt):

> [W]e evaluate 14 state-of-the-art LLMs and find that GPS reasoning remains challenging, with substantial variation across tasks: models are generally more reliable at real-world geographic reasoning than at geometric computations. Geographic knowledge degrades hierarchically, with strong country-level performance but weak city-level localization, while robustness to coordinate noise suggests genuine coordinate understanding rather than memorization.